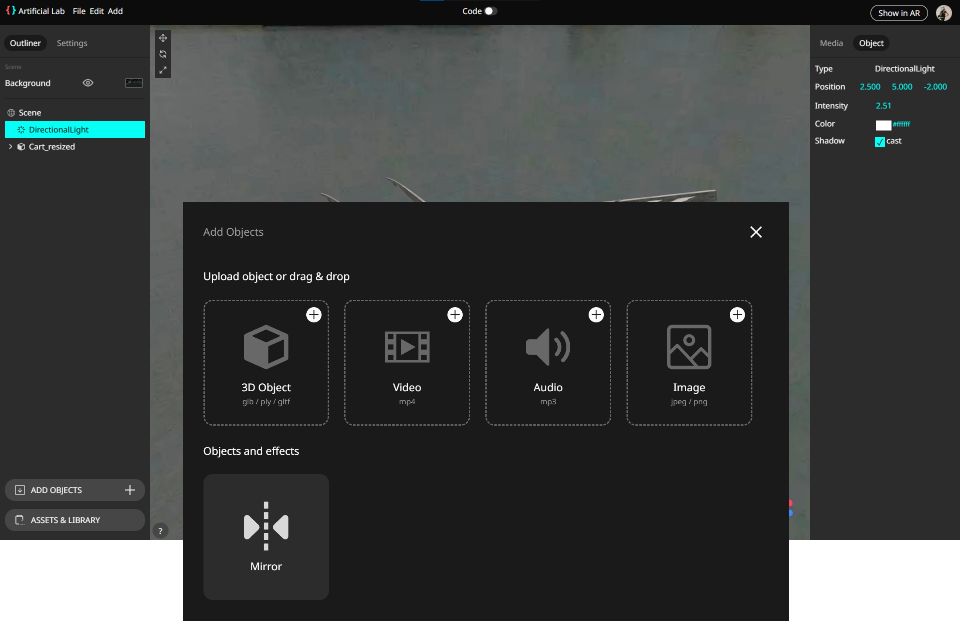

Artificial LAB: Browser-Based 3D Editor

The Artificial LAB is the core, browser-based editor for the Artificial Museum Framework. This community-developed infrastructure empowers artists, curators, and activists to easily deploy AR artifacts.

Direct 3D Asset Integration

The editor lets you import standard 3D assets into your scene and set each object's position, scale, and rotation while editing in real time. This simplified pipeline, often leveraging techniques used in Three.js, lets non-developers achieve high-fidelity 3D rendering in a performant, web-native environment and create work on site, directly within the AR experience.
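To make the position, scale, and rotation controls concrete, here is a minimal sketch of how such a placement could be applied to a point on a model. The `Vec3` and `Placement` types and the `placePoint` function are hypothetical names chosen for illustration, not the LAB's actual data model, and the sketch assumes uniform scale, rotation around the vertical axis only, and a right-handed, Y-up coordinate system as used by Three.js.

```typescript
// Hypothetical types for illustration; the LAB's real data model may differ.
type Vec3 = { x: number; y: number; z: number };

interface Placement {
  position: Vec3;       // offset in world space, in meters
  rotationYDeg: number; // yaw around the vertical (Y) axis, in degrees
  scale: number;        // uniform scale factor
}

// Apply scale, then yaw rotation, then translation to a local-space point.
function placePoint(local: Vec3, p: Placement): Vec3 {
  const rad = (p.rotationYDeg * Math.PI) / 180;
  const cos = Math.cos(rad);
  const sin = Math.sin(rad);

  // 1. Scale the point relative to the object's origin.
  const sx = local.x * p.scale;
  const sy = local.y * p.scale;
  const sz = local.z * p.scale;

  // 2. Rotate around Y, then 3. translate into world space.
  return {
    x: sx * cos + sz * sin + p.position.x,
    y: sy + p.position.y,
    z: -sx * sin + sz * cos + p.position.z,
  };
}

// Example: a point one unit to the right of the model's origin,
// doubled in size, turned a quarter turn, and raised one meter.
const placed = placePoint(
  { x: 1, y: 0, z: 0 },
  { position: { x: 0, y: 1, z: 0 }, rotationYDeg: 90, scale: 2 },
);
console.log(placed);
```

The order matters: scaling and rotating before translating keeps the object turning around its own origin rather than around the scene origin.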

Time-Based Media and Spatial Audio

Moving beyond simple playback, the system treats video and sound as true spatial elements in the scene graph: audio is positioned spatially, with volume and direction reacting dynamically to the user's movement and distance. Furthermore, video textures can be attached directly to objects or surfaces, allowing media to fully integrate into the 3D environment and encouraging physical exploration to uncover the depth of the art.
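As an illustration of volume reacting to distance, the sketch below computes the gain of a positioned sound using the "inverse" distance model defined for `PannerNode` in the Web Audio API. Whether the LAB uses this exact model is an assumption; the `DistanceModel` interface and the `inverseDistanceGain` function are hypothetical names for this example.

```typescript
// Hypothetical settings object, mirroring the PannerNode parameters of the same names.
interface DistanceModel {
  refDistance: number;   // distance (in meters) at which the sound plays at full volume
  rolloffFactor: number; // how quickly the volume drops beyond refDistance
}

// Inverse distance model from the Web Audio API:
// gain = ref / (ref + rolloff * (max(d, ref) - ref))
function inverseDistanceGain(distance: number, m: DistanceModel): number {
  // Closer than refDistance, the gain stays at 1 instead of growing further.
  const d = Math.max(distance, m.refDistance);
  return m.refDistance / (m.refDistance + m.rolloffFactor * (d - m.refDistance));
}

const model: DistanceModel = { refDistance: 1, rolloffFactor: 1 };

// The sound gets quieter as the visitor walks away from the artifact.
for (const meters of [0.5, 1, 2, 4]) {
  console.log(`${meters} m -> gain ${inverseDistanceGain(meters, model)}`);
}
```

With these settings, doubling the distance from 1 m to 2 m halves the gain, and at 4 m the gain is a quarter, so a visitor has to approach a piece to hear it clearly.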

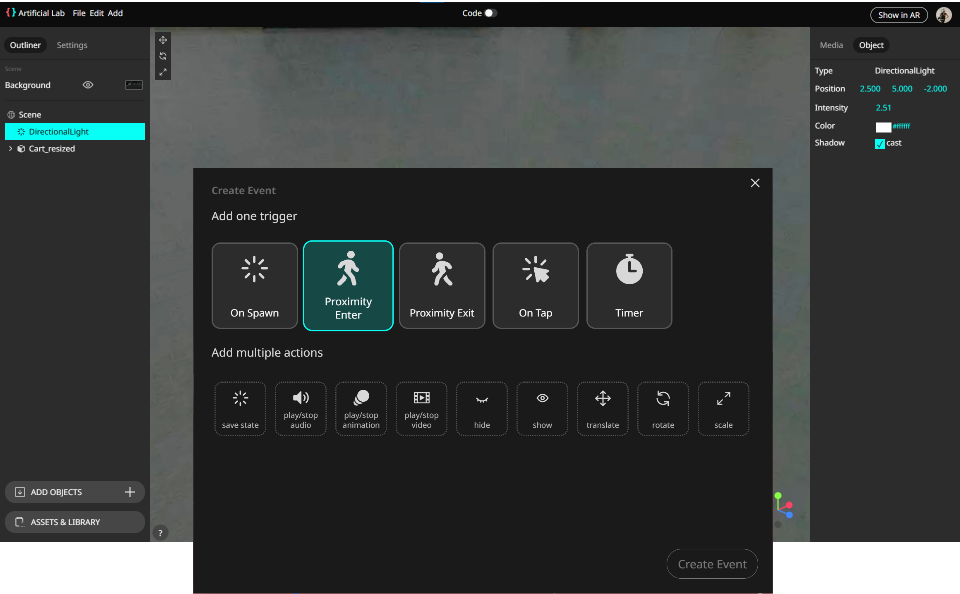

Modular Interactivity and Logic

The LAB offers a no-code system for building complex, event-driven narratives from modular Triggers (e.g., on enter/exit, on distance, on click) and Actions (e.g., play an animation, change an object's visibility, move an object in space). This visual scripting layer for interaction design opens up game-like mechanics and subtle, responsive art without requiring any coding proficiency.
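Under the hood, a trigger/action pairing of this kind can be modeled as a small event-driven pattern. The following sketch shows one possible shape, with an enter/exit trigger based on distance and two visibility actions. All names (`SceneObject`, `Action`, `ProximityTrigger`, `show`, `hide`) are hypothetical and chosen for illustration; they are not the LAB's internal API.

```typescript
// Hypothetical scene object with only the property this example needs.
interface SceneObject {
  name: string;
  visible: boolean;
}

// An Action is any function that changes the target object.
type Action = (target: SceneObject) => void;

const show: Action = (target) => { target.visible = true; };
const hide: Action = (target) => { target.visible = false; };

// Runs onEnter actions when the user comes within `radius` of the object
// and onExit actions when they leave again.
class ProximityTrigger {
  private inside = false;
  private readonly radius: number;
  private readonly target: SceneObject;
  private readonly onEnter: Action[];
  private readonly onExit: Action[];

  constructor(radius: number, target: SceneObject, onEnter: Action[], onExit: Action[]) {
    this.radius = radius;
    this.target = target;
    this.onEnter = onEnter;
    this.onExit = onExit;
  }

  // Call once per frame with the current user-to-object distance in meters.
  update(distance: number): void {
    const nowInside = distance <= this.radius;
    if (nowInside === this.inside) return; // no boundary crossed, nothing to do
    this.inside = nowInside;
    const actions = nowInside ? this.onEnter : this.onExit;
    for (const action of actions) action(this.target);
  }
}

// A statue that appears only while the visitor is within three meters.
const statue: SceneObject = { name: "statue", visible: false };
const trigger = new ProximityTrigger(3, statue, [show], [hide]);

trigger.update(10); // far away: stays hidden
trigger.update(2);  // entered the radius: shown
trigger.update(5);  // left the radius: hidden again
```

Keeping triggers and actions as separate, interchangeable pieces is what makes a visual, no-code editor possible: any trigger can be wired to any list of actions without writing new logic.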